Webinar

Alumni Ventures Blockchain Fund Presents A Deep Dive into the Metaverse Roundtable

Alumni Ventures’ Blockchain Fund is focused on the universe of opportunities opened up by blockchain technology. It’s a chance to invest in a diversified portfolio of ~20-30 promising, venture-backed companies innovating in areas like Metaverse, non-fungible tokens (NFTs), decentralized finance (DeFi), and more.

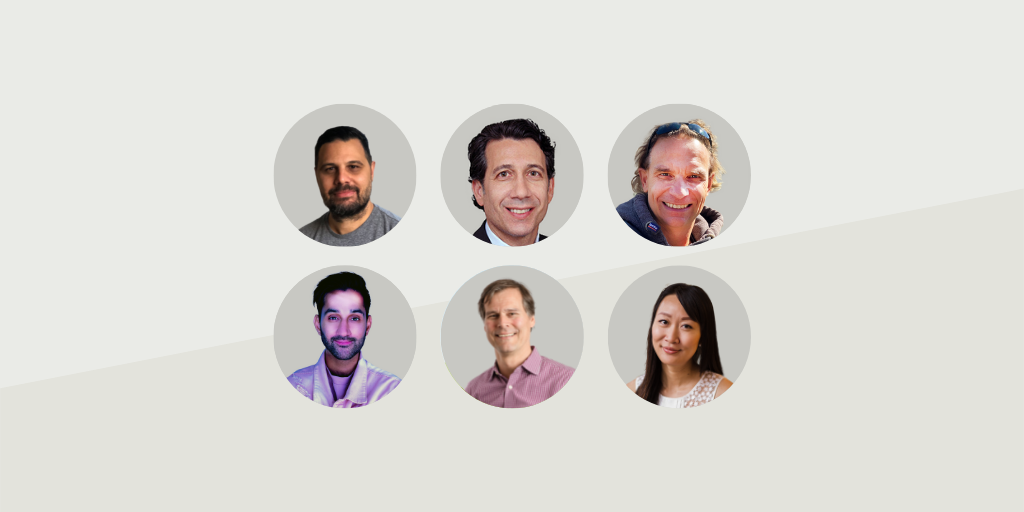

Watch our on-demand snippet of the presentation featuring AV’s CIO Anton Simunovic and AV’s Blockchain Fund Principal Sophia Zhao with guests from Mythical Games, Upland, Pixelynx, and Wedbush. The panel will take a deep dive into trends in the Metaverse, NFTs, gaming, and blockchain.

During the session, we will deep dive into:

- Metaverse

- Non-fungible tokens (NFTs)

- Gaming

- Blockchain

Note: You must be accredited to invest in venture capital. Important disclosure information can be found at av-funds.com/disclosures.

Alumni Ventures’ Blockchain Fund will focus on new sectors and ventures using blockchain tech. We will provide investors in the fund with a portfolio of ~20-30 promising, venture-backed companies innovating in areas like decentralized finance (DeFi), the Metaverse, non-fungible tokens (NFTs), and more. This is an actively managed fund led by a three-person investing team, all with deep VC and blockchain experience.

To learn more, click below to review fund materials or book a call with a Senior Partner.

Frequently Asked Questions


Speaker 1:

Welcome everyone. We really appreciate you taking the time out of your day to join us for today’s webinar on the latest advances in AI and machine learning, a conversation with CEOs and founders. I’m Matt Caspari, and I’m the managing partner of Strawberry Creek Ventures, which is an Alumni Ventures fund that is focused on the UC Berkeley community. I’m excited to be leading this webinar and moderating our panel today.

Before I dive in, I’ll start by reading a few legal disclosures. We are speaking today about Alumni Ventures and our views of the associated landscape. This presentation is for informational purposes only and is not an offer to buy or sell securities, which are only made pursuant to the formal offering documents for the fund. Please review important disclosures and the materials provided for the webinar, which you can access at av-funds.com/disclosures.

And a few quick housekeeping items: You will not be on camera and you’ll be muted throughout the entire presentation. We are recording this webinar and will share it with you after. I encourage you to submit questions throughout the webinar. To ask questions, please enter them through the Questions section of your GoToWebinar control panel and click Submit.

Behind the scenes, we have Taylor, one of our Investor Relations Managers. He’s supporting us and you, and he’ll help me field your questions as they come in. If we don’t get to your specific question today, we’ll do our best to follow up with you via email. And if you’d like to learn more about Alumni Ventures and investing in venture capital, I encourage you to book a call with one of our Senior Partners. They welcome the opportunity to speak with you directly. I’ll have Taylor drop a link in the chat where you can book time on their calendar.

They’ll also include a link to a secure data room where you can explore fund materials, see performance details, and begin the process to become an investor with Alumni Ventures, if that’s of interest to you.

So, moving over to the agenda for today: I’ll start by giving you a brief overview of Alumni Ventures. I’ll then cover a few highlights of what’s been happening in this very exciting space over the past year. The bulk of the time today is going to be the panel discussion and Q&A.

Our mission at Alumni Ventures is to democratize access to the venture capital asset class and make it more accessible for investors and entrepreneurs. Our first fund was around the Dartmouth alumni community, and we’ve grown now to over two dozen funds. Across those, we’ve raised over $1 billion in capital. We have 8,000 investors across our funds and over 1,000 portfolio companies that we’ve been able to back. Alumni Ventures was ranked the third most active venture firm in the world according to PitchBook’s 2021 Global League Tables.

Our approach to venture capital at Alumni Ventures is network-powered venture capital. We build communities around alumni networks, and we’re proud to have grown our network to over 600,000 supporters.

So, to set the stage for the conversation today, I wanted to highlight a few significant events in AI over the last year. We’ve seen powerful image generators like DALL·E and others make vast improvements on text-to-image diffusion models. We saw Meta’s game-playing AI beat humans at an online version of Diplomacy, which is a popular strategy game that is extremely complex.

DeepMind published a paper in Nature showing how they developed an AI designed to perform a type of calculation called matrix multiplication, and it beat a decades-old record for computational efficiency, opening up new paths to faster computing. And I’m sure everyone saw OpenAI’s release of ChatGPT, the first AI language model to get widespread adoption with millions of users.

Looking forward to this year, on December 31st, OpenAI’s president tweeted: “2023 will make 2022 look like a sleepy year for AI advancement and adoption.” So very exciting times.

At Alumni Ventures, in addition to our funds that are focused around alumni communities, we also have several thematic funds. One of those is our AI Fund, which has developed an extensive and diversified portfolio of investments covering various aspects of AI and machine learning. The logos here show some of the companies that we’ve had the pleasure of backing at Alumni Ventures, and I’m thrilled that the CEOs from three of these companies are joining us today on our panel to discuss the latest advances in AI and ML.

Panelists, if you could please turn on your webcams and mics, we’ll have you join us up here. Great. Good to see you all, and thanks for joining.

We’ll start the conversation by having you share a little bit about yourself and also giving us a high-level overview of your company. Emrah, I’ll have you kick us off.

Speaker 2:

Thank you, Matt. Great being here. Great being part of the Alumni Ventures family.

I’m Emrah Gultekin, the co-founder and CEO of Chooch. Basically, what we do is we’re a computer vision company, and the big idea has been trying to copy human visual intelligence and comprehension into machines. That has been the challenge for all of us in this process, and we’ve been able to move the inferencing and the model training to a certain level.

So we’re a complete computer vision AI lifecycle company. We do dataset generation and annotation, we do model training, we do inferencing, we do analytics, and we do MLOps with that. Great to be here and great to talk about what’s happening in AI today and how it’s going to change the future.

Speaker 1:

Excellent. Thank you. Yashar?

Speaker 3:

Thanks for having me, Matt. Great to be here on this panel, and also appreciate the support of Alumni Ventures. Very excited.

I’m Yashar Behzadi, I run Synthesis AI. We’re a San Francisco-based company focused on pioneering synthetic data to help build more capable and ethical computer vision models.

All AI is dependent on the data you feed it, and we’re creating an on-demand simulation platform to allow you to create diverse, realistic worlds and enable you to build models in a very different way than today.

We combine generative AI approaches with traditional visual effects technologies to create these immense worlds. I’m very excited to be here and to talk about the latest advances.

Speaker 1:

Great. I appreciate it. Or?

Speaker 4:

Yeah, thank you, Matt, and thanks for having me. Hello everyone.

I’m Or Netzer, co-founder and CEO of Data Heroes. What we’re doing is building a library for data scientists that helps them solve some of the biggest challenges today in machine learning.

This includes reducing the time, effort, and resources required to develop and maintain high-quality machine learning models.

We do this using a unique methodology that allows us to substantially reduce the size of a dataset to a smaller subset that still maintains the statistical properties and corner cases of the full dataset. This means that all the data science operations they need to do on a really large dataset can be done on the smaller subset we create—and they’ll get the same results.

So everything becomes a lot faster and easier. Cleaning the data, which is a major effort, becomes easier because there’s a lot less data to clean. Training and tuning the model becomes faster because there’s less data for the model to compute. Updating and maintaining the models in production becomes more cost-effective because it’s cheaper, compute-wise, to do it with less data.
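To make the idea of a smaller, representative subset concrete, here is a minimal sketch using plain stratified sampling. This is a swapped-in, much simpler technique than the methodology the speaker describes, and it is not the Data Heroes library; the function name `stratified_subset` and its parameters are hypothetical. It keeps roughly the same class proportions as the full dataset while guaranteeing that rare classes (the corner cases) are not dropped.

```python
import random
from collections import Counter, defaultdict


def stratified_subset(labels, fraction, min_per_class=1, seed=0):
    """Return indices of a smaller subset that keeps class proportions.

    Hypothetical helper for illustration only: it samples each class
    separately so that rare classes are never lost entirely.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    chosen = []
    for indices in by_class.values():
        # Keep the requested fraction, but never fewer than min_per_class
        # and never more than the class actually contains.
        keep = max(min_per_class, round(len(indices) * fraction))
        keep = min(keep, len(indices))
        chosen.extend(rng.sample(indices, keep))
    return sorted(chosen)


# Toy dataset: one dominant class, one smaller class, one rare class.
labels = ["cat"] * 900 + ["dog"] * 97 + ["fox"] * 3
subset = stratified_subset(labels, fraction=0.1)
subset_counts = Counter(labels[i] for i in subset)
```

Cleaning, training, and tuning would then run on `subset` instead of the full list. A production approach would also attach weights to the kept samples so that statistics computed on the subset match the full dataset more closely.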

This week we actually released our first beta version, so we’re excited about that.

Speaker 1:

Awesome. Excited to have all of you. Again, appreciate it.

Prior to the webinar, we prompted ChatGPT to come up with a list of questions, so I’ll start there. I’ll also weave in some of the questions Taylor is receiving through the GoToWebinar chat. So again, if you have questions, please do enter those. We’ll get to as many as we can.

Starting out, at a very simple high level: what is AI? Emrah, I know you have a 10-year-old. How would you explain AI to a 10-year-old?

Speaker 2:

Thanks, Matt. This is the ultimate challenge, isn’t it? I don’t think we’ve done a really good job of simplifying that message. There have been oversimplifications by saying things like “putting a brain in a machine,” but that’s really not the step function we’re talking about here.

I think we’re many, many decades away from that. The same thing happened in history when the ENIAC came out in the 1940s. People said it was like a big brain. It actually weighed 27 tons, and now you have watches that are a thousand times more powerful than the ENIAC.

So the brain analogy is a bit flawed, but it does work with kids.

So when you say, “Oh, it’s like putting a brain in the machine,” they automatically understand that. Although that’s not really what this is about, I try to demonstrate it with apps immediately. I have some practice with my kids and their friends trying to explain what we do. What we do as a company is we teach computers what to see, really.

We have an IC2 app that basically scans live videos and images and populates detections of what it sees, so it understands contextually what’s happening. That provides some understanding and demonstration of what’s happening. Recently, more apps have come out—obviously ChatGPT and others—where you can demonstrate what’s happening.

For kids, I think demonstration is important. I think it’s important for everybody to be able to demonstrate what’s happening. I don’t think we’ve done a really good job on the demonstration piece. It’s been kind of woven in abstraction.

You say things like, “We teach computers how to see.” It’s like playing a video game and you have the other person—the computer—playing against you. But at the end of the day, I don’t think we’re at the step function where you can say it’s analogous to a brain yet. We’re all coexisting here—different people working on different components of this problem.

There are lots of different segments of AI as well. NLP is a huge segment. Computer vision is a huge segment. You have dataset generation, which is a totally different segment, and so forth: voice, and many others. By the time all those things come together, I think we’re very far away from that type of “brain” analogy.

I hope that answers some of the questions. [laughs] Yeah, at least a little bit.

Speaker 1:

That’s great. If other panelists have any other insights… A somewhat related question that came through is: You mentioned machine learning as well. What’s the difference between AI and machine learning?

Speaker 3:

Maybe I’ll just build on Emrah’s analysis of AI. For me, it’s around algorithms meant to mimic human capability.

What we’re realizing as we continue to progress in AI is that humans are pretty smart. They’re very good at doing a lot of things that machines can’t, and in certain domains, machines are way better than what we can do.

We try to mimic what the human brain can do. I think there are multiple layers. The way I think about it is:

- Multimodal perception: vision, speech, text. As Emrah pointed out, these come together for perceiving the world.

- Understanding: using those inputs to understand the world.

- Reasoning and navigation: humans are good at navigating and manipulating environments. We see that in robotics.

- Generating/creativity: this was thought to be the hardest capability to achieve, but generative AI emerged last year and progressed tremendously.

So when we look at the holistic capabilities of humans, the aspiration is to mimic some of those—and eventually move beyond human capability into superhuman capability, albeit in more narrow domains for now.

Speaker 1:

Great. In the opening presentation, I covered a few of the advances that have happened over the last year. It feels like things are moving extremely quickly. We’ve seen a ton of interest.

What development did you find most exciting? Maybe Or, I’ll loop you in.

Speaker 4:

Absolutely, thank you. Probably generative AI has been the most exciting development this year.

First, the quality of the models has improved to a level that has impressed everyone. Even people deep in the AI space have been amazed at the advancements of these models.

ChatGPT is one example, and DALL·E is another. There are additional examples of really cool generative AI models and large language models. I actually read this week about another LLM called Claude that passed the bar exam—which is pretty impressive.

There’s been a huge advancement this year, and these models will continue improving next year. Importantly, people now finally see and understand how AI applies to their everyday lives. That wasn’t as visible to non-technical folks until recently.

Speaker 1:

Yeah, that’s great. Emrah, are there any developments that really caught your attention?

Speaker 3:

Sure. Maybe I’ll talk a little about the technological basis that powered generative AI last year.

There’s been a mix of slow and fast developments over the past year. Generally speaking, compute infrastructure and capability have improved, and access to data has broadened. The bottleneck was always the model.

In 2017, a phenomenal seminal paper introduced transformer architectures. For the first time, this allowed for parallelizable computation and large, effective memory through positional encoding and self-attention networks. That opened the capability to process a huge amount of data in a compute-efficient way, powering GPT and similar models.
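For readers who want to see what positional encoding and self-attention look like mechanically, here is a minimal pure-Python sketch. It makes simplifying assumptions that are stated in the comments: a single attention head, no learned query/key/value projections, and toy four-dimensional embeddings. The function names are illustrative and do not come from any particular library.

```python
import math


def softmax(scores):
    """Turn a list of raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def positional_encoding(position, dim):
    """Sinusoidal encoding: even indices use sine, odd indices use cosine."""
    encoding = []
    for i in range(dim):
        angle = position / (10000 ** ((2 * (i // 2)) / dim))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding


def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections.

    Assumption: real transformers learn separate query, key, and value
    matrices; here the input vectors play all three roles.
    """
    dim = len(tokens[0])
    outputs = []
    # Each query row is independent of the others, which is what makes
    # the computation easy to parallelize on real hardware.
    for query in tokens:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * value[j] for w, value in zip(weights, tokens))
            for j in range(dim)
        ]
        outputs.append(mixed)
    return outputs


# Toy embeddings: the first and third tokens are the same "word".
embeddings = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0]]
inputs = [
    [e + p for e, p in zip(vector, positional_encoding(position, 4))]
    for position, vector in enumerate(embeddings)
]
attended = self_attention(inputs)
```

Because the position is added to each embedding before attention, the first and third tokens end up with different inputs even though they are the same word. That is how order information survives a computation that otherwise treats the sequence as an unordered set.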

So, technologically, these things have been built. Transformers really accelerated that.

To Or’s point, what we saw last year was a wave of consumer applications of these technologies. These have been embedded into health sciences and deeper tech stacks for years—less visible but impactful. When you can start talking to a ChatGPT interface, it captures public imagination and excitement.

Another dynamic last year was competition. Stability AI open-sourced foundational models, which became a catalyst for OpenAI to be more aggressive with productization and bundling of solutions, responding to open-source momentum already spreading.

We’re now seeing an interesting ecosystem of players emerging. This competition will continue accelerating in 2023 as they vie for the hearts and minds of both consumers and AI practitioners.

Speaker 1:

Yeah, absolutely. For myself, we’ve invested in Yashar’s company, Synthesis AI, so we’ve been active in this space for a while. But just the momentum among friends and other people not active in AI has been pretty wild over the last year.

Maybe diving a little deeper, I’d love to hear how you think through the technology stack. How would you explain that to our audience?

Speaker 2:

Yeah, I can take the first hit on this. There was a question about the difference between AI and machine learning. Obviously, AI is the umbrella term for all of this, but machine learning is the technique of how you actually train models: create datasets and train models.

In our pipeline, the critical AI part is kind of threefold. We have deep learning frameworks, and that’s a critical part of what we do. Then we also have neural nets, which are also an important aspect of creating the model.

So, we have the deep learning framework like PyTorch or TensorFlow, and then you have the neural nets, which are the algorithms that create the model—things like YOLO or ResNets, and so forth. After that, you have the rules-based analytics. After you train a model, you might embed rules into the model, or you might have rules outside of the model.

So, we kind of have a threefold stack that’s a critical part of the machine learning portion. But it’s much more than that because, for us in computer vision, there’s also an added element of complication with video.

Video is very challenging because it has large data gravity, meaning these are very large files that you need to handle with care. These large files have to be transported or inferenced at a certain place. There’s a lot of decoding and encoding going on with videos, which isn’t AI per se, but it’s an important element of the stack.

So, I would say those are the main parts for us. Obviously, you have all the normal coding with Python and frontend/backend stuff—that’s just standard—but then you have the deeper AI and machine learning parts in these major fields of deep learning and neural nets.

Speaker 1:

I would… oh, please, jump in.

Speaker 4:

Yeah, I was going to say I think this is a good description. I would add data management as becoming increasingly important as part of the stack. This includes acquiring or collecting the data you need to train the model, labeling or annotating the data, cleaning the data, and making sure you have the right, accurate data.

The quality of the data itself has a huge effect on the quality of the model that you end up with. So, I think data management is an important piece of the stack that’s becoming more and more critical.

Then there’s the MLOps piece, which is actually keeping the models running and up to date in production—a big challenge by itself.

Speaker 3:

Yeah, I’ll double-click on Or’s point around data.

Historically, when you train these systems in a supervised way, you need the input data and the labels, the things you’re trying to predict or understand from the scenes. For simple tasks in computer vision like “is there a cat or a dog in the image,” that’s easy. But if you need something more complicated, like segmenting every object in the scene at a pixel level, labeling becomes inefficient, costly, and a bottleneck.

In more complex environments—like autonomous vehicles, robotics, or AR/VR and metaverse applications—there are many things you want to understand from the scene that human annotation can’t provide. Examples include the distance to an object, its material properties, complex interactions, or multimodal imaging like LiDAR or NIR.

The complexity of obtaining and labeling data is immense. That’s why many people are realizing that data operations—how you manage and collect data, supplement it with synthetic data, and generate the right data—is going to be key for ultimate performance.

There’s also an ethical layer here: If you’re using human-centered data, you need to ensure privacy is preserved and that you have the rights to the data. If your dataset is imbalanced—lacking equal representation of certain classes (with humans, this often relates to demographics)—you’ll have bias in your model results. That could mean underperformance or differentiated performance for certain groups, which has big ethical implications.
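The "differentiated performance" point can be checked with very little code. The sketch below is illustrative only: the group names and evaluation records are made up for the example, and `accuracy_by_group` is a hypothetical helper. It computes accuracy separately for each group so that a gap hidden by the overall average becomes visible.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group.

    records: iterable of (group, true_label, predicted_label) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    return {group: correct[group] / total[group] for group in total}


# Made-up evaluation results: group_b is under-represented (10 samples
# versus 100) and the model is noticeably worse on it.
records = (
    [("group_a", 1, 1)] * 90
    + [("group_a", 1, 0)] * 10
    + [("group_b", 1, 1)] * 6
    + [("group_b", 1, 0)] * 4
)

per_group = accuracy_by_group(records)
overall = sum(int(t == p) for _, t, p in records) / len(records)
gap = max(per_group.values()) - min(per_group.values())
```

In this toy data the overall accuracy looks healthy because the larger group dominates the average, while `per_group` and `gap` expose the weaker result for the smaller group.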

At Synthesis AI, we try to address that explicitly with our solution. With synthetic data, you can preserve privacy and minimize bias. But overall, data is what it’s all about.

Speaker 2:

Yeah, I’d add to that with an example.

There’s no model we’ve taken into production without synthetic data for the past three years. We’ve been using synthetic data that long. The generative AI piece currently has a lot of hype on the consumer side, on the frontend side.

But the real benefit has been for training AI models. That’s where generative AI shines—helping us balance datasets. Historically, we thought we could just collect data. The entire ecosystem believed that, but there simply isn’t enough data representation to do this with real data alone.

So, we seed the models with real data, then generate synthetic data. The result is a very robust dataset that allows you to train and take models into production quickly. The data part is critical—it’s the beginning of the pipeline.

Speaker 1:

Yeah, and you’re starting to touch on this. Maybe I’ll ask the question more explicitly:

What about the business opportunity in AI? Where do we think value is going to accrue across the technology stack? How does it differ for large enterprises versus startups?

I’m an investor, and I’m sure there are others on the call—so where’s the investment opportunity across the stack?

Speaker 3:

Historically, AI has been transforming many solutions over time, bringing efficiency, automating tasks, and creating value across organizations.

Foundational models have changed the landscape a bit, so I’ll double-click there. Foundational models from providers like OpenAI, DALL·E, Stability AI, and others are trained on massive amounts of data with huge compute costs. They’re essentially untouchable for most organizations.

So, what’s the business model for companies in this context? It comes down to proprietary data and fine-tuning. If you’re a company with a lot of customer reviews, specialized legal data, or deep medical datasets, fine-tuning foundational models allows you to build differentiated, verticalized applications that are highly valuable.

Across the stack, we’ll likely have only a few foundational model players—similar to cloud providers. Everyone will sit on top of and leverage those.

Value (and money) will flow to:

- Foundational models

- Verticalized solutions

- The infrastructure providers—chip manufacturers and cloud providers—powering these applications.

Speaker 4:

Yeah, absolutely. I think, first of all, we’re just scratching the surface. There’s still a ton of opportunities. I think there’s been a lot done in the MLOps space in the past few years, but we’re still far from having good solutions for maintaining, updating, and managing models in production. So, I think there’s still a significant opportunity there.

And back to the data management piece we mentioned before, I think there’s a very significant opportunity there as well. Companies are starting to realize the importance of it, but there really aren’t a lot of solutions out there. I think there’s a big opportunity in data management overall.

Speaker 2:

Yeah, excellent. Just to add to that, I think the value is really in scalability. We’re still seeing scalability issues. We have some foundational models, but you can’t really fine-tune them for your own use cases very quickly because these models are very, very large. You need to create submodels that attach to them somehow.

That’s being done by some companies, but we still face two big challenges: scaled inferencing and MLOps in a meaningful, production-level way.

In terms of value to the enterprise, what we’re seeing is that C-level and upper management—even middle management—are very outcome-oriented. They want very simple outcomes, which are complex to achieve but easy for them to visualize and understand.

We focus on the analytics part of it: what do you do with all this data coming through now? We’re generating inference every six milliseconds from thousands of video streams simultaneously. That’s a lot of big data.

You need to create alerts and insights for clients. So, it’s very analytics-driven. It’s a known issue—not just an AI issue—but this new structured data generated by inferencing is where the real value is. This data can be used for insights, alerts, and even monetized.
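As a small illustration of turning raw inference output into an alert, the sketch below applies one rule outside the model. Everything here is hypothetical: the `Detection` record, the `crowd_alerts` function, the thresholds, and the sample data are invented for the example and are not the speaker’s product or API.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One model output: what was seen, in which frame, how confidently."""
    frame: int
    label: str
    confidence: float


def crowd_alerts(detections, label="person", min_confidence=0.5, max_count=2):
    """Return the frames where more than max_count confident detections
    of the given label appear. This is a rule applied after inference,
    not something learned by the model."""
    counts = {}
    for det in detections:
        if det.label == label and det.confidence >= min_confidence:
            counts[det.frame] = counts.get(det.frame, 0) + 1
    return sorted(frame for frame, count in counts.items() if count > max_count)


# Made-up detections from three video frames.
detections = [
    Detection(0, "person", 0.9),
    Detection(0, "person", 0.8),
    Detection(1, "person", 0.9),
    Detection(1, "person", 0.7),
    Detection(1, "person", 0.6),
    Detection(1, "person", 0.2),  # too uncertain to count
    Detection(2, "car", 0.95),
]
alerts = crowd_alerts(detections)
```

Keeping the rule separate from the model means a business user can change the threshold or the label without retraining anything.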

At the enterprise level, that’s where the business value lies. For us as practitioners, we care about how to actually get there. We’re still wrestling with pipeline challenges, like how to build out foundational models, among others.

At the end of the day, the business owner or buyer wants very, very simple outcomes. For us, getting to that simplicity has been challenging. It’s like answering the “explain it to a 10-year-old” question—but in a different way.

Speaker 1:

Alright. Let’s get even more specific. I’d love to hear how each of your companies is using AI. Who’s your customer? What do you do for them, in simple terms, to improve their products or services?

Anyone can jump in. Yashar, do you want to kick us off?

Speaker 3:

Sure. We focus on the data side. Anytime you’re training computer vision models, you need representative data that’s diverse and perfectly labeled. As I described earlier, that’s very hard to get.

Take a use case like autonomous vehicles: you want the model to be robust if a child runs in front of the car. Obviously, you can’t go out in the real world and have kids run in front of a car to capture that data.

With next-generation use cases, you need to understand the world at a very deep level. That means having expanded label sets around 3D landmarks, material properties, and complex interactions. Those enable the next generation of computer vision models.

We focus on the data problem—allowing teams to use our platform to simulate the world with enough photorealism and diversity to train models virtually.

The analogy is: in any other engineering discipline, you use CAD or design tools to reproduce reality with enough fidelity to rely on it for building systems. That’s now happening in computer vision.

As we reproduce object physics, material properties, and environmental complexity, we can train systems virtually. This is 100x faster, costs 100x less, allows quick iteration, and lets you test system designs before deploying expensive hardware in the real world.

So, we’re solving the data problem: taking all the complexity of deploying hardware in the field, capturing data, and having humans annotate it—and turning it into a simple API call. Use the API, get the data you need, and build your system.

Our customers are large computer vision companies or teams within Fortune 500 firms. We focus on several verticals around human-centered systems: we’ve worked with handset manufacturers, autonomous vehicle companies, AR/VR firms, aerospace, and more.

We’re building what is sometimes the backbone to train computer vision-based platforms.

Speaker 1:

Excellent. Or, I’d love to hear more about what you guys are doing and who your customers are.

Speaker 4:

Absolutely. Our end user is the data scientist, and our library helps them throughout the full lifecycle of developing their model and managing it in production.

Here’s how:

- Dataset Reduction:

We reduce the size of their dataset, which helps them prioritize cleaning and improving data quality. Our library identifies incorrect labels or out-of-distribution issues in the dataset. They can quickly resolve these issues and improve data quality.

- Faster Model Training:

After cleaning the data, they can train their model much faster because they’re working with significantly less data. Often, cleaning and retraining happen in a loop: clean some data → retrain → clean more → retrain, until they reach satisfactory model quality.

- Hyperparameter Tuning:

The next phase is hyperparameter tuning, where they run many iterations with different hyperparameters to see what gives the best result. This is also much faster with our library because of the smaller dataset size.

Overall, the development process is much quicker, and they end up with a better-quality model.

- Managing Data Drift in Production:

A big issue in production is data drift: over time, models become less accurate because new data differs from old data. To fix this, you’d normally retrain your model on old plus new data. That’s expensive and time-consuming, and the dataset grows linearly over time.

Our library solves this by updating the subset of data used to train the model with new incoming data, without growing linearly. The subset size grows very slowly, allowing them to retrain models quickly and frequently.

This helps data scientists from start to finish: developing, training, and managing their model in production efficiently while avoiding data drift issues.
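One classic way to keep a bounded training subset up to date as new data streams in is reservoir sampling. The sketch below uses that well-known technique as a stand-in; it is not the method the speaker’s library uses, and it keeps the subset at a fixed size rather than letting it grow slowly. The `Reservoir` class name is hypothetical.

```python
import random


class Reservoir:
    """Fixed-size uniform sample over a stream (reservoir sampling).

    Every item seen so far has the same probability of being in the
    sample, no matter how long the stream gets.
    """

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self._rng = random.Random(seed)

    def add(self, item):
        self.seen += 1
        if len(self.items) < self.capacity:
            # Still filling up: keep everything.
            self.items.append(item)
            return
        # Pick a slot among all items seen so far; replace only if the
        # slot falls inside the reservoir.
        slot = self._rng.randrange(self.seen)
        if slot < self.capacity:
            self.items[slot] = item


reservoir = Reservoir(capacity=100)
for sample in range(1000):        # stands in for the original training data
    reservoir.add(sample)
for sample in range(1000, 2000):  # stands in for new production data
    reservoir.add(sample)
```

After both loops the retraining set in `reservoir.items` still holds 100 samples, drawn from old and new data alike, so the cost of each retrain stays flat even though the total data seen has doubled.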

Speaker 3:

Yeah, last year was awesome. Being in the AI space and seeing the consumer appreciation for these technologies was exciting. A couple of innovations and competitive dynamics we touched upon earlier will continue to drive the ecosystem this year.

The foundational models and the competitive dynamic between the open-source community (like Stability) and the established players will continue to shape the field. The dynamic that’s super interesting is this: there were maybe 10,000 AI practitioners in the world, not a big number, but now that these foundational models have opened up, and with AI accessible via API, you now have millions of software developers who can tap into these capabilities.

What does that mean? It means a huge shift in how solutions are stitched together and the new specialized, vertical applications that will emerge. I think we’ll see an explosion of capabilities and functionalities as this giant ecosystem gets activated.

The other thing is the interface. Interfacing with AI models used to be complex—you had to be a specialist. But now you have things like ChatGPT, which gained millions of users in just a few days. This creates powerful feedback loops: the community helps improve the underlying system, and those network effects will make these foundational models improve at an accelerating rate.

Speaker 1:

Excellent. Or, your thoughts?

Speaker 4:

Yeah, thanks. I think last year was definitely transformative for AI. For 2023, I’ll be watching a few developments closely.

First, generative AI: we’ve only seen the tip of the iceberg. Beyond text and images, I expect significant breakthroughs in areas like video generation, multimodal models, and more realistic synthetic data.

Second, responsible AI and governance. With the widespread use of these models, regulation, privacy protections, and ethical frameworks will become critical. How organizations handle data, model transparency, and fairness will have a big impact on adoption and trust.

Lastly, I’m interested in AI democratization. As foundational models become more accessible, small and mid-sized enterprises—and even individual developers—will be able to leverage AI in ways previously only possible for large tech companies. This democratization could unleash a wave of innovation across many industries.

Speaker 1:

Great insights from everyone.

Thank you to all our panelists for sharing your perspectives on AI’s rapid evolution, technical and business opportunities, ethical considerations, and future developments.

And thanks to our audience for joining us today. If you’d like to learn more about investing with Alumni Ventures, don’t forget to check the links we’ve provided for booking a call or registering for our upcoming AI Fund webinar.

We look forward to seeing you in future sessions.

Speaker 3:

And then finally, I think “text-to-everything” is what I predict for next year. We already have text-to-image in our space, but I think we’re going to see text-to-3D, text-to-video. You’ll see this really interesting set of capabilities being unlocked, which will change a number of industries.

If you look at the TAM (Total Addressable Market) for foundational AI models, it’s essentially all human productivity. The TAM is enormous. Obviously, how you productize that, build a business, and develop sustainable models is still to be determined, but I think that’s where the real excitement lies.

Speaker 1:

Yeah, love it. Or, let’s wrap this up here. What are you looking forward to?

Speaker 4:

Yeah, I agree with everything Emma and Yeshar said. I think 2023 is indeed going to blow 2022 out of the water, and it’s exciting to see all these developments happening.

One more thing I’ll add: this is a bit more backend-focused, probably less exciting, but I think it’s very important. Over the past few years, we’ve gotten used to more data, bigger models, and more computers. It felt like there was no end to how much compute you could throw at AI.

But that’s starting to change. The current economic situation is part of it. Companies are realizing they can’t just keep spending endlessly; they’re focusing more on efficiency.

Microsoft mentioned this in their earnings release a few days ago regarding Azure Cloud. AWS also spoke about it in their earnings report. So I think there will be a bigger focus on:

- How can we make AI development more efficient?

- How can we reduce the carbon footprint of all this activity?

- How can we reduce costs while maintaining performance?

It’s less flashy than building bigger models, but it’s going to be critically important for companies this year.

Speaker 1:

Got it. Makes a lot of sense.

Excellent. I’d like to thank everyone in the audience for joining us today. We really appreciate you taking the time, and a very special thank you to our panelists for sharing their valuable insights and experiences.

About your presenters

Rudy has 15 years of traditional mass-market game development experience on some of the most prolific franchises in the industry: Club Penguin, Call of Duty, Skylanders, and World of Warcraft. He co-founded Mythical Games in 2018 to bring blockchain and NFTs to mass-market games, with the belief that true ownership of digital assets, verifiable scarcity, and integrated secondary markets would be the future of games.

Marc Lewis is a Co-Head and Managing Director of TMT Investment Banking at Wedbush Securities in San Francisco. He has over 25 years of focused technology investment experience, and has worked as a portfolio manager at some of the most prestigious hedge funds in the industry. Most recently, he was an investment banker focused on the software sector at BTIG. Previously, he managed TMT portfolios at Citadel, Millennium and Pequot Capital and began his portfolio management career at SAC Capital in 1998. Marc has an extensive network of public, private, and strategic software investors that he has accumulated over the years, and brings a very unique perspective to the world of investment banking. Earlier in his career, he held sales and marketing roles in the software industry, which ultimately shaped the foundation of his sector knowledge. Marc graduated from the University of Michigan and received his Bachelor of Arts degree in Organizational Behavior.

Dirk is a serial entrepreneur and an early adopter of blockchain and related technologies, based in Silicon Valley. He co-founded European and US-based companies in the FinTech and digital media spaces, including the Financial Times Deutschland and Forbatec, which was acquired by SunGard (today NYSE:FIS). Dirk has mentored over 30 startups through his work at international startup accelerators in Silicon Valley and is a frequent speaker and panelist on the metaverse, blockchain, and platform economics. He studied Business Administration in Frankfurt and Paris and received a Ph.D. from the European Business School in Germany, where he wrote his doctoral thesis on private and state-controlled currencies.

Inder Phull is the founder and CEO of Pixelynx, a new venture that is building the music metaverse with iconic music industry partners deadmau5 and Richie Hawtin. The company is focused on blurring the lines between music, blockchain, and gaming through a mobile application built on the Niantic Lightship ARDK as well as a desktop game that will be released in Q3 2022.

Anton has 20+ years of technology experience as a proven venture capital investor, entrepreneur, and operating executive in companies ranging in size from startup to Fortune 10. Most recently, he founded and led Vener8 Technologies, a technology commercialization company he started with GE. Previously, Anton led the Software and Internet Infrastructure Group at GE Equity, where he directly invested $72 million in 10 companies generating more than $500 million of realized gains. Anton has substantial international experience in Canada, China, Europe, and Israel, and has served on the board of directors of more than 20 private and public companies. Anton has a BSc Engineering from Queen’s University in Canada and an MBA from Harvard Business School.

Sophia dove into the exciting world of crypto in 2017 and started her crypto career with Galaxy Digital’s Advisory team, supporting a portfolio of blockchain startup clients with investor relations and Initial Coin Offering (ICO) initiatives. She continued building her crypto deal flow and book of investor contacts during her tenure at Huobi US and Crypto.com’s exchange, working with institutional clients such as crypto hedge funds and high-frequency traders on market-making and volume trading initiatives, covering the Americas, EU, and LATAM regions.

Sophia is actively plugged into different protocol ecosystems and accelerators. She judges ETHGlobal and Solana’s hackathons, mentors at Algorand’s Accelerator and Berkeley’s Blockchain Xcelerator, and serves as a governor of Harmony’s Incubator DAO.

Sophia comes from a background in business development and corporate development, having worked with CXOs and entrepreneurs on their financing and business strategies. She holds a BBA from Simon Fraser University, an MBA from the University of British Columbia, and an MAM from Yale School of Management.